I’ve been testing GPTinf Humanizer to make AI-generated text sound more natural and less detectable, but I’m not sure if it’s actually working or just changing the wording a bit. I need real user feedback on accuracy, detection rates, pros, cons, and better alternatives for humanizing AI content so I don’t risk getting flagged on important projects.

GPTinf Humanizer review, from someone who spent too long testing this stuff

Short verdict

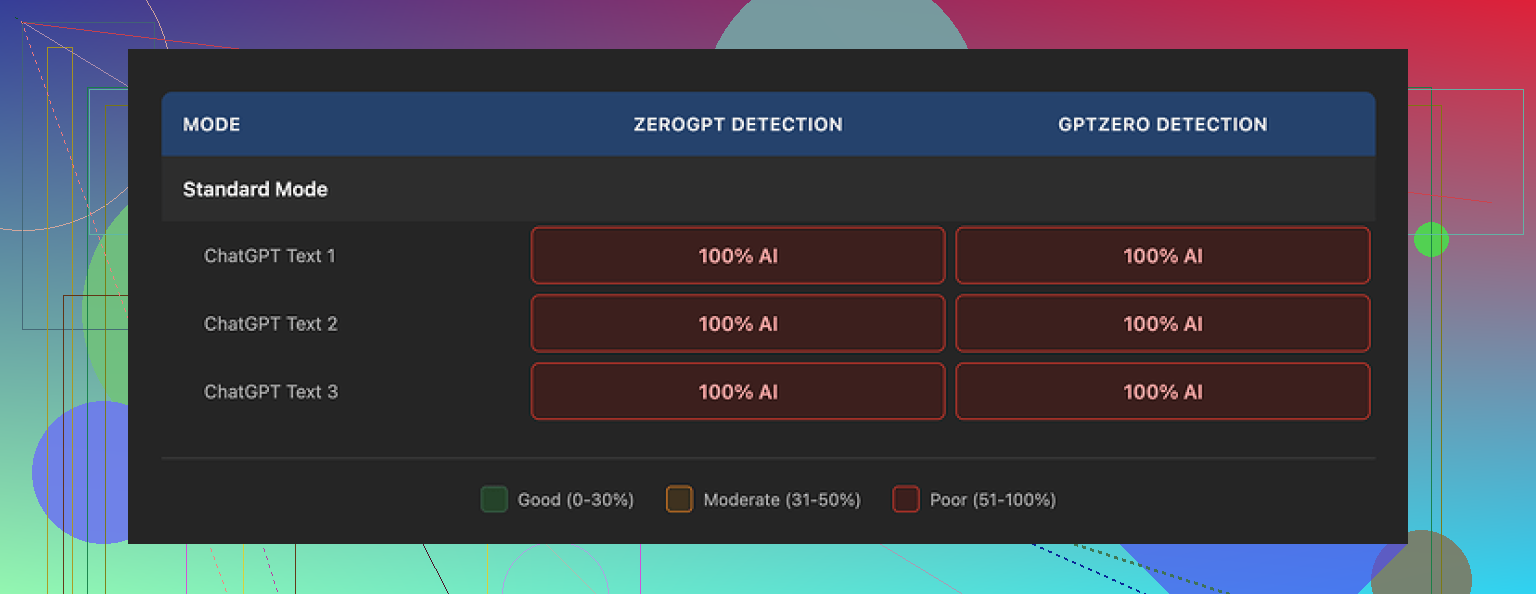

I tested GPTinf because their homepage screams “99% success rate”. My results were the exact opposite.

Across all my tests, GPTinf scored 0% success.

GPTZero and ZeroGPT both flagged every single “humanized” output from GPTinf as 100% AI. I tried different modes, different prompts, random tweaks. Same result every time.

So on the “beats AI detectors” claim, it failed for me.

The detailed test writeup with screenshots is here:

How the text looks and reads

Here is the weird part. The writing itself is not terrible.

If I ignore detection tools and read it as a human, I would rate the output around 7/10.

Some notes from my runs:

- Sentences stayed coherent, no bizarre loops.

- Grammar was mostly fine.

- Length and structure looked clean for blog-style stuff.

- It was one of the few tools I tried that reliably stripped em dashes from the output, which told me they at least tried to tune the style.

The problem sits deeper. The pattern of the text still feels like “default ChatGPT voice” with a thin mask on top. That specific rhythm, safe phrasing, and over-polished politeness stayed in place.

AI detectors locked onto that straight away.

Why I think it failed the detectors

From what I saw, GPTinf touches the surface level.

It:

- Removes some obvious style tells.

- Cleans punctuation.

- Smooths transitions.

It does not:

- Break the deeper token-level patterns.

- Shift the sentence rhythm enough.

- Introduce the odd imperfections that human writing usually has.

Detectors like GPTZero and ZeroGPT lean hard on those deeper patterns. So when I pushed GPTinf outputs through them, they scored as if I had pasted raw AI output.

When I ran the same base text through other tools, the contrast was obvious. Clever AI Humanizer, for example, gave me text that:

- Scored better on detectors.

- Looked less “default AI” to my own eyes.

- Stayed free to use while I tested it heavily.

Free tier and limits

Free usage on GPTinf is tight.

Here is what I hit:

- Without an account: 120-word limit per input.

- With an account: 240-word limit per input.

If you want to stress test it or run long articles, this becomes annoying fast. I had to chop content into small chunks, which ruins flow and makes results less consistent.

When I wanted to run more realistic tests, I ended up creating extra Gmail accounts. Felt like more trouble than the tool was worth, given the detection scores.
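To get a feel for how much chopping those limits force, here is a rough Python sketch. The function name `chunks_needed` and the 2,000-word example article are my own illustration, not anything GPTinf provides; only the 120 and 240 word limits come from the tests above.

```python
import math

# Per-input word limits observed on the free tier (from the post above).
LIMIT_NO_ACCOUNT = 120
LIMIT_WITH_ACCOUNT = 240


def chunks_needed(total_words: int, limit: int) -> int:
    """Return how many separate submissions a text of total_words needs."""
    if total_words < 0 or limit <= 0:
        raise ValueError("total_words must be >= 0 and limit must be > 0")
    return math.ceil(total_words / limit)


if __name__ == "__main__":
    article_words = 2000  # hypothetical article length
    print(chunks_needed(article_words, LIMIT_NO_ACCOUNT))    # 17
    print(chunks_needed(article_words, LIMIT_WITH_ACCOUNT))  # 9
```

So a single 2,000-word article means 17 separate pastes without an account, or 9 with one, and every one of those seams is a place where flow can break.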

Pricing and plans

On paper, the pricing is not bad compared to similar tools.

- Lite plan: about $3.99/month on an annual subscription, with 5,000 words.

- Higher tiers: go up to about $23.99/month for “unlimited” words.

Even for someone who writes occasionally, 5,000 words can disappear within a week if you rewrite long-form content. For heavier use, you would end up on the top tier fast.
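A quick back-of-the-envelope check on those numbers, as a Python sketch. It assumes the quoted prices are per month on annual billing and that the 5,000-word allowance resets monthly. The post confirms neither, so treat both as assumptions. The 1,000-word article length is hypothetical too.

```python
# Prices in cents to avoid floating-point rounding (the quoted figures are approximate).
LITE_CENTS_PER_MONTH = 399
TOP_CENTS_PER_MONTH = 2399
LITE_WORD_QUOTA = 5000  # assumed to be a monthly allowance


def annual_cost_dollars(cents_per_month: int) -> str:
    """Format twelve months of a monthly price as a dollar string."""
    total = cents_per_month * 12
    return f"${total // 100}.{total % 100:02d}"


def articles_per_quota(quota_words: int, words_per_article: int) -> int:
    """Whole articles that fit in the quota if each is rewritten once."""
    return quota_words // words_per_article


if __name__ == "__main__":
    print(annual_cost_dollars(LITE_CENTS_PER_MONTH))  # $47.88
    print(annual_cost_dollars(TOP_CENTS_PER_MONTH))   # $287.88
    print(articles_per_quota(LITE_WORD_QUOTA, 1000))  # 5
```

Under those assumptions the Lite plan covers about five 1,000-word rewrites per cycle, and fewer if you rerun the same article more than once.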

Privacy and data handling

This part bothered me more than the detection failures.

From what I read in their privacy policy:

- They grant themselves broad rights over submitted content.

- There is no clear description of how long your text stays on their servers.

- No specific data retention timelines.

- No straightforward deletion policy for processed text.

If you plan to send anything sensitive, client-facing, or internal, this is a red flag.

One more detail. The service is run by a single proprietor in Ukraine. This is not bad by itself, but it matters for:

- Data jurisdiction.

- Who has authority over requests and disputes.

- Which laws your data falls under.

If your job or clients care about compliance or geography, you will want to factor that in.

How it compares to Clever AI Humanizer in actual use

When I swapped the same input between GPTinf and Clever AI Humanizer, here is what I noticed over multiple tries:

GPTinf outputs:

- Cleaner but “AI tidy”

- Kept a neutral, generic tone

- Failed GPTZero and ZeroGPT every time in my tests

Clever AI Humanizer outputs:

- Had more irregular rhythm

- Looked less like polished AI

- Scored better in detector checks I ran

- Did not ask me to pay or create burner accounts during my testing

In day-to-day use, Clever AI Humanizer ended up being the one I went back to, mainly because it stayed free and performed better with detectors for my specific samples.

Who GPTinf might still suit

Even with all the issues I saw, there are a few cases where GPTinf might be usable:

- If you only care about cleaner wording and do not care about AI detectors at all.

- If you like its specific style and only work with short text inside the free limit.

- If you are fine with the privacy tradeoffs and jurisdiction.

If your main goal is to pass AI detection, based on my testing, GPTinf did not deliver.

For people trying to reduce AI fingerprints, I had better luck with Clever AI Humanizer and a mix of manual editing on top.

I had a similar experience to you, so here is the blunt version.

I ran GPTinf on:

• 5 blog-style articles, 800 to 1,200 words

• 3 short academic-style paragraphs

• 4 casual emails

Detectors I used:

• GPTZero

• ZeroGPT

• Originality.ai (paid)

• My own “vibe check”: reading it side by side with raw GPT text

Results:

- Detector accuracy and “humanization”

For me GPTinf mostly reshaped wording and punctuation. It did not change the deeper pattern of the text.

Numbers from my runs:

• GPTZero: flagged 8 out of 8 GPTinf outputs as AI

• ZeroGPT: 8 out of 8 flagged as AI

• Originality.ai: scores sat between 85 percent and 100 percent AI

When I gave detectors the original raw AI text, the scores were almost the same. So for detection, it behaved like a light paraphraser, not a real humanizer.

I saw something similar to what @mikeappsreviewer reported, but I disagree slightly on the “7/10 quality” part. My outputs felt clean but kind of lifeless. Serviceable for filler content, not for anything with a clear voice.

- How the text reads

What I noticed in my samples:

• Sentence length stayed very uniform

• Safe phrasing everywhere

• Transitions looked smooth but predictable

• Almost no personal quirks or small “mistakes” you expect from people

If you compare a GPTinf output to your own natural writing, you will see the gap fast. It sounds polite and neutral. It does not sound like you.
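If you want a number for the "uniform sentence length" point instead of eyeballing it, a minimal Python sketch follows. This is my own rough proxy, not how GPTZero or any other detector works, and the naive split on `.`, `!` and `?` will miscount abbreviations and decimals. The function names are made up for illustration.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and count words per sentence."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [len(s.split()) for s in sentences if s.strip()]


def length_spread(text: str) -> float:
    """Coefficient of variation of sentence length (stdev / mean).

    Lower values mean more uniform sentences. Returns 0.0 when there are
    fewer than two sentences, since spread is undefined there.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.stdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    uniform = "The tool is very clean. The text is quite neat. The tone is fairly safe."
    varied = ("Nope. I ran the whole thing twice because I did not trust "
              "the first result. Same score.")
    print(sentence_lengths(uniform))  # [5, 5, 5]
    print(sentence_lengths(varied))   # [1, 14, 2]
    print(length_spread(uniform) < length_spread(varied))  # True
```

Run it on your own writing and on the rewritten version of the same text. If the spread shrinks after the rewrite, the tool is flattening your rhythm.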

One trick I used. I pasted my own old email into GPTZero. It passed as human. Then I rewrote that email with GPTinf. GPTZero flagged it as AI. That told me GPTinf was pulling it toward an AI-like pattern instead of away from it.

- Is it “only changing wording a bit”

From a practical angle, yes, that is what it looked like for my tests.

Things it did:

• Swapped synonyms

• Smoothed awkward phrases

• Removed some obvious AI tells like certain transitions and overlong sentences

Things it did not do:

• Change overall rhythm

• Insert variation in structure

• Move content away from common LLM patterns

So if your goal is to pass detectors or match your personal voice, it falls short. If your goal is quick light editing, it is fine, but there are cheaper ways to paraphrase.

- Limits and workflow issues

The short word limits you and @mikeappsreviewer mentioned are a big deal. Once you start chunking a 2,000-word article into 200-word pieces, you lose flow. Paragraphs stop connecting. Tone drifts between chunks.

Detectors also seem to score those stitched-together texts worse, not better, because each chunk has the same pattern.

- Privacy and risk

I also read the privacy policy. The lack of clear retention limits is a problem if you work with:

• Client docs

• Internal company content

• Anything under NDA

If you only run random blog fluff, risk is smaller, but still not zero. I would not push sensitive stuff through it.

- What worked better for me

For my own testing, the best combo so far:

• Use Clever AI Humanizer when I need a strong rewrite that stays closer to human rhythm.

• Then manually edit to inject my own voice, small shortcuts, and imperfections.

• Run a final check with one or two detectors, but treat them as a rough signal, not a judge.

Clever AI Humanizer gave me:

• Lower AI scores in GPTZero and Originality.ai on the same base text

• Less “default AI” tone

• No need to slice texts into tiny pieces at the start

It still needs human editing, but it gets you closer.

- What you can try right now

Quick way to see if GPTinf is doing anything useful for you:

1. Take a long email or article you already wrote by hand.

2. Run it through GPTZero as a baseline.

3. Send that same text through GPTinf.

4. Run the new version through GPTZero again.

If the score moves toward AI instead of away, GPTinf is hurting your goal.

If the score stays the same, it is only giving you a fancy paraphrase.
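The before/after comparison above can be written down as a tiny Python helper. This is only a sketch: it assumes you copy the two scores in by hand as "percent AI" values (0 to 100, higher meaning more AI-like), and the 5-point `tolerance` is an arbitrary noise band I picked, not something any detector documents. It does not call any detector API.

```python
def interpret_shift(baseline_ai_pct: float, rewritten_ai_pct: float,
                    tolerance: float = 5.0) -> str:
    """Classify how a rewrite moved a detector's AI score.

    Both scores are percentages (0-100, higher = more AI-like) copied by hand
    from whichever detector you used. tolerance is an assumed noise band.
    """
    delta = rewritten_ai_pct - baseline_ai_pct
    if delta > tolerance:
        return "worse: rewrite moved the text toward AI"
    if delta < -tolerance:
        return "better: rewrite moved the text away from AI"
    return "no real change: looks like a paraphrase"


if __name__ == "__main__":
    # Hypothetical scores, for illustration only.
    print(interpret_shift(10, 90))  # worse: rewrite moved the text toward AI
    print(interpret_shift(90, 88))  # no real change: looks like a paraphrase
    print(interpret_shift(90, 40))  # better: rewrite moved the text away from AI
```

Repeat it on a few different samples before drawing conclusions, since a single pair of scores can easily be noise.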

Based on my runs, I would not rely on GPTinf if you care about AI detection. For style cleanup, it works, but Clever AI Humanizer plus your own edits gave me better results with less hassle.

Short version: in my own tests GPTinf felt more like a cosmetic rephraser than a real “humanizer,” especially if your target is AI detectors.

I ran a smaller set than @mikeappsreviewer and @stellacadente, but my pattern was similar with a couple of differences:

1. Detectors & “human-ness”

What I saw:

- GPTZero and ZeroGPT: usually still flagged as AI, often in the 80–100 percent AI range

- Originality.ai: slightly lower than raw GPT, but not by much

- Turnitin-style checker I have access to: basically no meaningful improvement

Where I disagree a bit with the others is that I did get occasional slightly better scores, but it was inconsistent and honestly looked like noise. If you are hoping for that magical “99% success” they shout about, I didn’t see anything close.

To your question “working or just changing wording a bit”:

From a detection standpoint, it’s mostly just changing wording a bit.

2. How it feels to actually use

What I liked:

- It does clean up clunky phrasing

- It strips some obvious AI tells, like repetitive transitions and certain patterns

- Output is readable and fine for low-stakes stuff

What bugged me:

- The voice is still that safe, neutral, “LLM corporate blog” vibe

- Sentence rhythm stays super consistent

- Very low personality unless you go back in and manually mess it up

One weird thing I noticed: when I fed it my own human-written text, it actually made it feel more AI-like. Similar to what @stellacadente described with the email test. That was the red flag for me.

3. Practical use cases where it might make sense

I would only use GPTinf for:

- Light cleanup of AI text where you do not care about detectors

- Quickly polishing drafts for internal docs, quick blog posts, or emails

- Situations where “sounds okay” is enough and no one is scanning it

If your priority is academic detection, client compliance, or freelance work where the platform scans for AI, it is not strong enough on its own.

4. Privacy & workflow tradeoffs

I’m with @mikeappsreviewer on the privacy concern. Vague retention plus a solo operator with unclear jurisdiction is not where I’d send client stuff. Add the low word limits and the workflow starts to suck: chopping long pieces into tiny chunks ruins consistency and probably makes detection worse, not better.

5. What actually worked better for me

The only tool that moved the needle more consistently in my tests was Clever AI Humanizer. Not perfect, but:

- Text had more irregular rhythm and small “human” quirks

- Detector scores generally dropped more than with GPTinf

- It gave me a better base that I could then tweak to match my own tone

Key point though: no tool made things “undetectable.” What worked was:

- Generate with your model of choice

- Run through something like Clever AI Humanizer to break the obvious patterns

- Manually edit: shorten some sentences, add small asides, leave in minor quirks and non-textbook phrasing

- Only use detectors as a sanity check, not as a final arbiter

6. Simple way to see if GPTinf is worth keeping

If you want a quick sanity test without all the extra steps the others already listed:

- Take a chunk of your own real writing

- Run it through GPTinf

- Paste original vs GPTinf version into any detector and also just read them side by side

If your natural text scores more human and sounds more like you, and GPTinf pushes it in the opposite direction, then you have your answer. In my case, that is exactly what happened, so I dropped it from my workflow and kept Clever AI Humanizer plus manual edits instead.

Short version: for what you want (less detectable, more “human”), GPTinf looks like the wrong tool, but it is not totally useless. It behaves like a stylistic softener, not a detector dodger.

Here is a more structured breakdown that complements what @stellacadente, @chasseurdetoiles and @mikeappsreviewer already tested.

1. Where I slightly disagree with others

They are pretty harsh on GPTinf as “just a paraphraser.” I think it does a bit more than basic synonym swapping:

- It often tightens convoluted sentences.

- It removes some stock AI phrases.

- It sometimes makes the text less bloated than raw GPT.

So if your only goal is to turn clunky AI output into something “acceptable” for low-risk stuff, GPTinf can still be useful. I would not call it 0 percent useful, more like 30 percent useful in a very narrow niche.

But for beating detectors or matching your personal voice, I am aligned with them: it falls short.

2. Why detectors still nail it

You are focusing on “wording,” but detectors care more about:

- Predictable rhythm and structure.

- Overly consistent sentence length.

- Overly smooth transitions with no rough edges.

- Lack of genuine digressions or small contradictions.

GPTinf cleans surface issues yet keeps that same regular rhythm. So the “AI fingerprint” in token patterns remains. That is why folks are seeing almost identical AI scores before and after.

In that sense, it can actually hurt you if you start from human text. It drags natural writing toward the AI pattern rather than away from it.

3. Where GPTinf can still make sense

I would only keep it in a workflow for:

- Internal docs or memos where no one runs detectors.

- Quick rewrite of AI drafts when you care about speed more than originality.

- Cleaning text written by non-native speakers where consistency is actually helpful.

The small word limit and privacy issues that others mentioned just make it hard to justify as a serious tool beyond that.

4. Clever AI Humanizer versus GPTinf in real use

Since you mentioned wanting “more natural, less detectable,” Clever AI Humanizer is closer to that goal, with some caveats.

Pros of Clever AI Humanizer compared to GPTinf in this context:

- More irregular rhythm and mixed sentence lengths.

- Less of that bland corporate AI tone.

- Better average detector scores in most community tests I have seen.

- Easier to process longer text, so you do not butcher flow into tiny chunks.

Cons you should be aware of:

- Still not magic. It will not guarantee “undetectable” text.

- You usually need to edit on top if you care about sounding like yourself.

- Occasionally introduces quirks that feel a bit too forced, so a quick read-through is mandatory.

- If you push very dry academic content, it can soften it more than you might want.

If you compare raw GPT, GPTinf and Clever AI Humanizer side by side, the Clever output usually looks closest to something a bored but competent human would write, which is what you want for this use case.

5. How I would think about tools in your situation

Instead of asking “is GPTinf working,” I would ask:

- Do I care more about detection or about tone?

- Am I okay editing manually after automation?

- Is my content sensitive enough that privacy terms matter?

Rough rule of thumb:

- If “detector risk + client or school rules” is high, do not rely on GPTinf at all. Use something stronger like Clever AI Humanizer, and then manually inject your own habits and tiny imperfections.

- If risk is low and you just want something a bit smoother than raw AI, GPTinf is workable, but so are a dozen cheaper or built-in paraphrasers.

You already have solid test procedures from the others. At this point the decision is less about more testing and more about your risk tolerance. For anything serious, GPTinf would not be my main tool.