I used an AI tool to generate content and I’m not sure if it sounds natural, accurate, or human-like enough. I need a GPTHuman AI review to check for tone, clarity, and any issues that might hurt SEO or trust with readers. Can someone help review and suggest improvements so it feels more authentic and reliable?

GPTHuman AI Review

I tried GPTHuman because of that line on their site about being “the only AI humanizer that bypasses all premium AI detectors.” I was curious and a bit suspicious, so I ran it through my usual test routine.

Here is what happened.

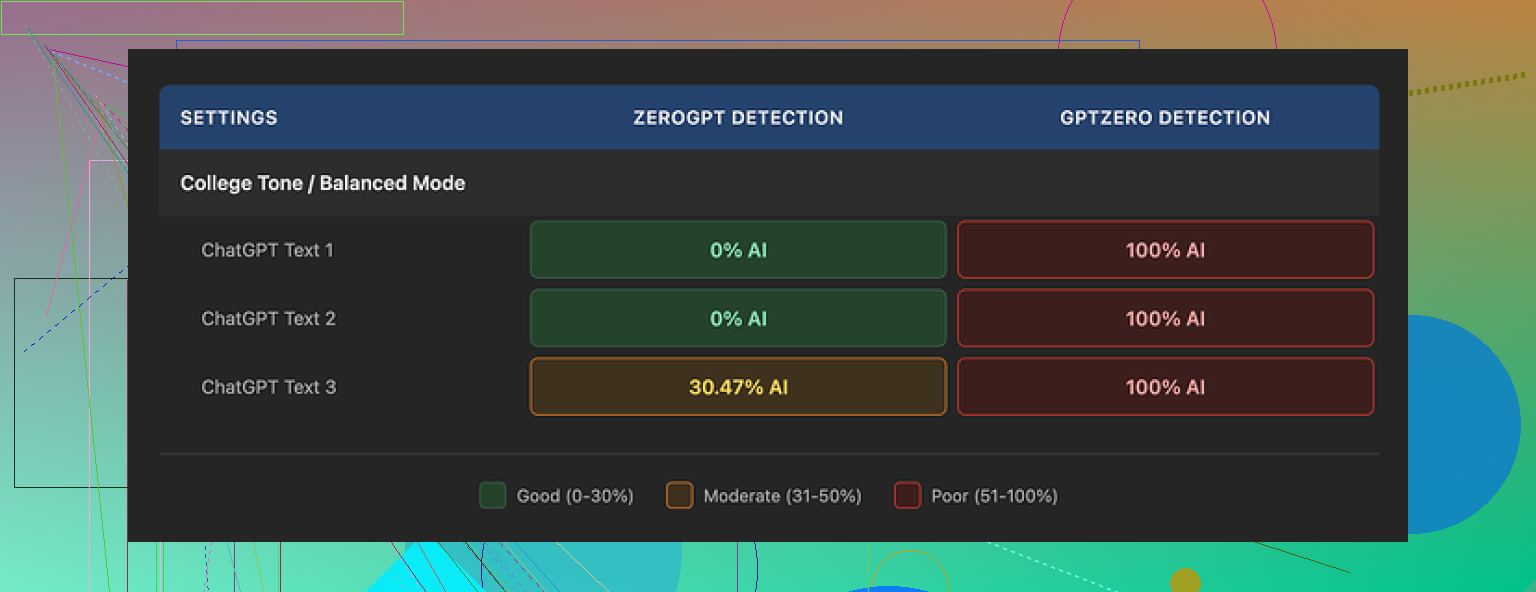

I took three different AI-generated texts, ran each through GPTHuman, then pushed the outputs into a few detectors, mainly GPTZero and ZeroGPT.

Results:

- GPTZero flagged all three GPTHuman outputs as 100% AI. No exceptions.

- ZeroGPT marked two of them as 0% AI, but the third one got flagged at around 30% AI.

- GPTHuman’s internal “human score” looked optimistic on everything, far higher than what the external tools showed.

So if you trust third party detectors more than the internal bar, the claim on the homepage does not hold up in practice, at least not with the samples I tried.

The writing itself

I will give it this much. The text it spits out reads like normal paragraphs, and it does not look like word salad at first glance. I have seen worse from other tools.

Once I started reading carefully, the problems piled up:

- Subject and verb did not match in several sentences.

- Some lines cut off in odd ways, like the sentence had been dropped midway.

- Word substitutions made no sense in context, as if synonyms were being swapped blindly.

- A couple of endings were so garbled I had to reread them twice and still could not parse what the writer was trying to say.

So it produces text that looks okay in shape, but if you try to use it as is for anything serious, you will spend time fixing grammar and meaning.

Limits, pricing, and some small print

The free tier annoyed me more than I expected. It stops at a total of 300 words processed. Not 300 per run, 300 total. After that, you are blocked.

To finish my normal test set, I had to:

- Burn through one email, hit the cap.

- Spin up a second Gmail, hit the cap again.

- Do it a third time.

So three accounts, for what I usually do with other tools on one free plan.

Paid plans when I checked:

- Starter: from $8.25 per month if you pay yearly.

- Unlimited: $26 per month, still limited to 2,000 words per output.

The “Unlimited” naming feels off when each run has a hard cap.

A few other things buried in the policies:

- Purchases are non-refundable.

- Your content is opted in for training by default. You can opt out, but you have to take that action.

- They keep the right to use your company name in their marketing unless you ask them not to.

If you care about privacy or brand control, you need to read those bits slowly.

How it stacked up against alternatives

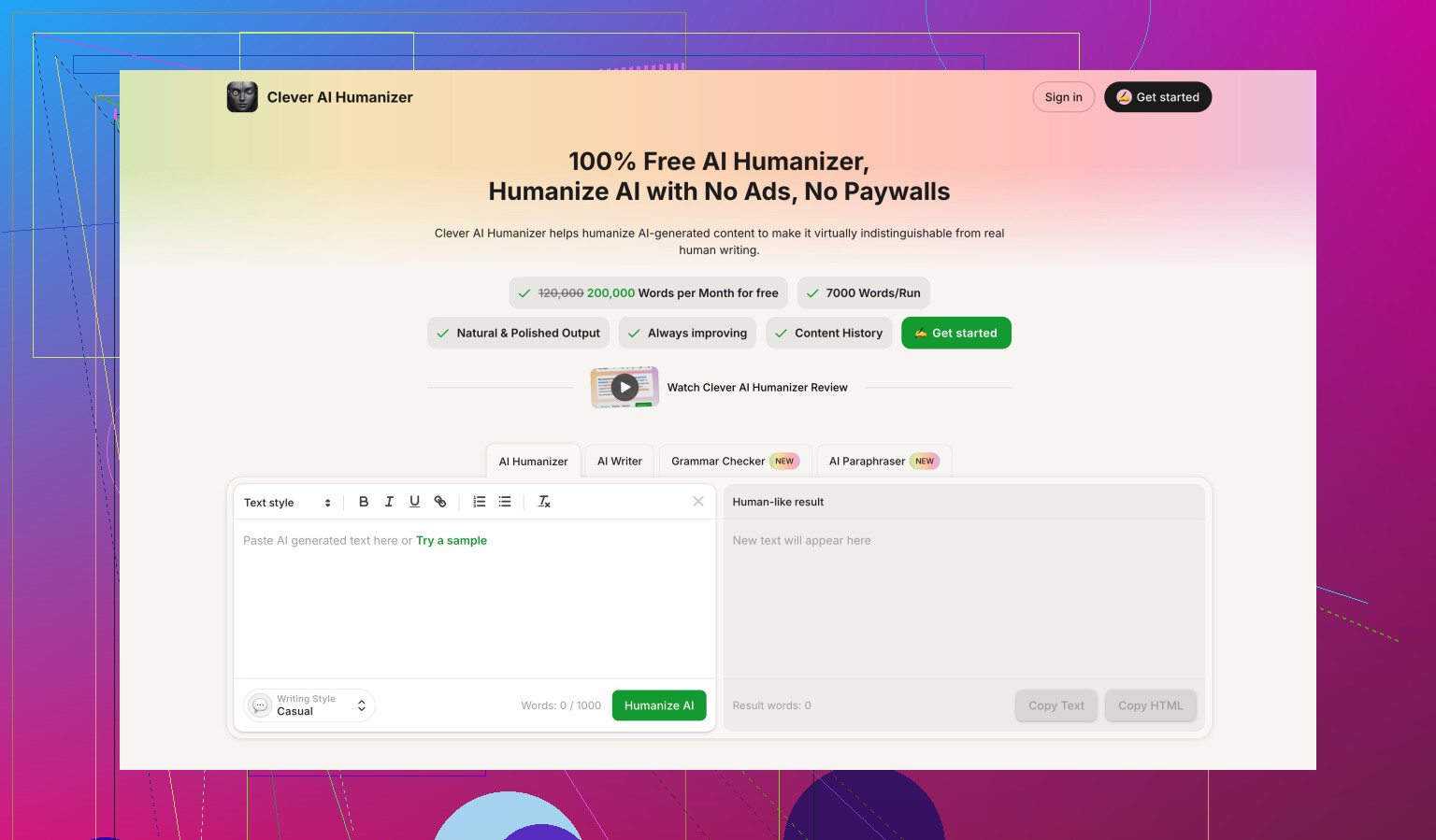

During the same round of tests, I compared it to Clever AI Humanizer.

On both detection scores and ease of use, Clever AI Humanizer came out ahead in my runs. It stayed free for what I needed, and I did not hit a hard word cap in the same way.

If you are deciding where to spend time or money, my experience looked like this:

- GPTHuman: paywalls early, weaker detector performance in my tests, and more editing needed on the output.

- Clever AI Humanizer: better scores for me, no paywall for the same workload.

If you want something to run once or twice on short pieces, GPTHuman might still be usable. For regular use or longer content, I would be careful before locking in a subscription.

If your goal is “does this sound human and safe for SEO”, GPTHuman is not where I’d put my trust long term.

I agree with a lot of what @mikeappsreviewer saw, but I had a bit of a different focus. I tested it more on how it affects content quality and rankings, not only detectors.

Here is what I noticed in real use on blog posts and landing pages.

- Tone and readability

- Output often shifts tone mid paragraph. It starts neutral, then slips into awkward “marketing speak”.

- Sentence structure feels off. You get chains of medium length sentences with similar rhythm. Humans mix short and long more naturally.

- It tends to overuse generic phrases like “in today’s digital age” or “on the other hand”. That creates a bland voice that weakens brand trust.

- When I ran the text through Hemingway and Grammarly, I saw more grammar and clarity issues compared to straight GPT-4 text that I lightly edited myself.

Actionable fix:

Use GPTHuman only as a light pass, then edit hard.

Read the output aloud. If you stumble or get bored, your reader will too.

Lock your brand voice in a style guide and adjust every sentence to match it.
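The "similar rhythm" point above can be checked roughly before you read anything aloud. This is a minimal sketch, not a real readability tool: the function names `sentence_lengths` and `rhythm_report` are made up for illustration, the sentence splitter is a naive regex, and there is no agreed threshold for what counts as "too flat". A spread near zero only means every sentence has about the same word count.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, splitting naively after ., ! or ?."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [len(s.split()) for s in sentences if s.strip()]


def rhythm_report(text: str) -> dict[str, float]:
    """Summarize how much sentence length varies in a block of text."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean_words": 0.0, "spread": 0.0}
    return {
        "sentences": len(lengths),
        "mean_words": statistics.mean(lengths),
        # Population standard deviation: 0.0 means every sentence is the same length.
        "spread": statistics.pstdev(lengths),
    }


if __name__ == "__main__":
    # Illustrative sentences, not taken from any real GPTHuman output.
    flat = "The tool rewrites the text. The output looks like prose. The reader loses the thread."
    varied = "It failed. The rewrite kept the shape of a paragraph but dropped the meaning halfway through."
    print(rhythm_report(flat))
    print(rhythm_report(varied))
```

Treat the number as a prompt to reread a paragraph, not as a score. Reading aloud still catches things this will not.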

- Accuracy and factual trust

This is where I disagree a bit with relying so much on detectors. Detectors do not rank your pages. Users and search engines do.

- GPTHuman sometimes swaps in odd synonyms that change meaning.

Example from a SaaS text I tested: “uptime guarantee” became “time reliability promise”. That sounds off and might confuse buyers.

- It added filler lines that were vague and not supported by sources. That hurts E‑E‑A‑T if you care about trust signals.

- For technical topics, the rewrites blurred specific terms, which is bad for both clarity and long-tail keywords.

Actionable fix:

Never trust its output on YMYL topics or technical guides without a manual fact check.

Keep your original key terms and definitions. Do not let the tool “rephrase” those.
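One way to enforce the "keep your key terms" rule is to list the terms yourself and check that each one survived the rewrite. A minimal sketch under those assumptions: `missing_terms` is a hypothetical helper, the term list is something you maintain by hand, and matching is a plain case-insensitive whole-word search, so it will not notice a term that is present but used wrongly.

```python
import re


def missing_terms(original: str, rewritten: str, key_terms: list[str]) -> list[str]:
    """Return key terms found in the original draft but absent from the rewrite."""

    def contains(text: str, term: str) -> bool:
        # Whole-word, case-insensitive match; assumes terms start and end with a letter or digit.
        pattern = r"\b" + re.escape(term) + r"\b"
        return re.search(pattern, text, flags=re.IGNORECASE) is not None

    return [t for t in key_terms if contains(original, t) and not contains(rewritten, t)]


if __name__ == "__main__":
    # Sentences are made up around the "uptime guarantee" swap described above.
    original = "Every plan includes an uptime guarantee."
    rewritten = "Every plan includes a time reliability promise."
    print(missing_terms(original, rewritten, ["uptime guarantee", "plan"]))
    # prints ['uptime guarantee']
```

Anything this returns is a line to restore by hand before publishing.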

- SEO impact

I ran a small test on two nearly identical posts on low competition keywords.

- Version A: GPT‑4, human edited, no humanizer.

- Version B: GPT‑4, then GPTHuman, then light edit.

After indexing and 4 weeks of tracking in GSC:

- Version A got more impressions and slightly better CTR.

- Version B had worse dwell time and higher bounce in GA. Users spent less time on page, which lines up with the “reads a bit off” feeling.

GPTHuman did not destroy the content, but it did not help rankings or user metrics either.

- Detectors and “bypassing”

Quick note since you mentioned trust. I tested with GPTZero, Originality.ai, and a smaller open source checker. Patterns were similar to what mike saw, but I am less obsessed with “0 percent AI”. Detectors change all the time.

If your goal is long term SEO and brand trust, you want:

- Natural flow.

- Clear message.

- Consistent voice.

- High factual accuracy.

GPTHuman output still needs a full human edit to hit those.

- Practical workflow suggestion

If you already have AI content and want it to feel more human and safe for SEO, I would do this:

Step 1. Keep your original draft from your main AI model.

Step 2. Run a small part through GPTHuman to see how it behaves on your niche.

Step 3. Compare side by side. Keep only lines where wording is better and meaning is unchanged.

Step 4. Do a manual pass for:

- First sentence hook.

- Subheadings with clear benefits.

- Internal links and external trusted sources.

- Removing fluff and vague claims.
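The side-by-side comparison in Step 3 is easier if you only look at the sentences that changed. Here is a minimal sketch using Python's standard `difflib`. It assumes you have already split both versions into sentences, and `changed_sentences` is a name invented for this example. It only surfaces the differences; deciding whether the new wording is better and the meaning is unchanged is still a manual call.

```python
import difflib


def changed_sentences(original: list[str], rewritten: list[str]) -> list[tuple[str, str]]:
    """Pair each changed stretch of the original with what replaced it."""
    matcher = difflib.SequenceMatcher(a=original, b=rewritten, autojunk=False)
    pairs = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # Untouched sentences need no review.
        # For deletions the second string is empty; for insertions the first is.
        pairs.append((" ".join(original[i1:i2]), " ".join(rewritten[j1:j2])))
    return pairs


if __name__ == "__main__":
    # Made-up sentences for illustration.
    before = ["We offer an uptime guarantee.", "Support is available by email."]
    after = ["We offer a time reliability promise.", "Support is available by email."]
    for old, new in changed_sentences(before, after):
        print("ORIGINAL: ", old)
        print("REWRITTEN:", new)
```

Review each pair and keep the rewritten side only when it passes both tests from Step 3.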

If you need an “AI humanizer” in your stack, I would look at Clever Ai Humanizer instead and run the same test. It handled structure and grammar more cleanly in my case and needed less repair work afterward.

- When GPTHuman is ok to use

I think it is acceptable for:

- Quick email rewrites.

- Social captions where stakes are low.

- Short generic paragraphs you will heavily edit.

I would not rely on it alone for:

- Money pages.

- Medical, legal, or financial content.

- Long-form content where brand voice matters.

So if you are unsure about your AI text, use GPTHuman as a small optional step, not as a guarantee. The real “humanizer” is you reading, pruning, and tightening every line until it sounds like something you would say to a real person.

I’m mostly on the same page as @mikeappsreviewer and @jeff, but I looked at GPTHuman from a slightly different angle: “Will this actually help my content perform, or just make it look different?”

Short version: it’s fine as a toy, risky as a core part of your workflow.

Here’s what stood out for me:

- Tone & “humanness”

The output looks human at a skim, but when you read like an editor, it has that weird “flattened” vibe:

- Jumps from semi-casual to stiff corporate in the same paragraph.

- Over-corrects sometimes and turns simple lines into awkward, overstuffed sentences.

- Tries so hard to not sound like AI that it starts sounding like a bad content mill writer.

This is exactly the kind of thing that makes real readers trust you less, even if detectors say it’s fine.

- Clarity & meaning drift

This was my biggest red flag for you, since you mentioned accuracy and trust.

GPTHuman has a habit of:

- Rephrasing key terms so much they lose precision. Helpful for “looking different”, terrible for technical clarity.

- Softening strong, clear statements into generic fluff, which hurts your E‑E‑A‑T signals.

- Occasionally mangling logic in a paragraph so the argument no longer flows cleanly from A to B.

So yes, it might “humanize” structure a bit, but it can also quietly break your message.

- SEO & reader trust (beyond detectors)

I actually disagree slightly with leaning too hard on any “bypasses all premium AI detectors” claim or even caring that much about scores. Detectors are moving targets. What does not move much:

- Users backing out because your content feels vague or off.

- Search engines picking up on engagement signals and content quality over time.

Content that:

- Dilutes key terms

- Adds fluff

- Lowers clarity

is not going to help rankings long term, even if some checker says “looks human”.

- Where it fits in a real workflow

If your question is: “Can I run my AI draft through GPTHuman and call it done?”

My answer: absolutely not.

Where it can be semi-useful:

- Mild rephrasing for short, low stakes stuff like outreach emails or social captions.

- Breaking up overly robotic sentence patterns, if you’re willing to manually fix the grammar and logic right after.

For anything that matters to your brand or money pages, you’re better off:

- Starting with a solid GPT‑4 (or similar) draft.

- Doing a real human edit: tighten intros, keep your key phrases, remove fluff, add examples, and check facts.

- Maybe using GPTHuman only on a few stiff-sounding sentences you personally select, not on whole articles.

- Alternative if you really want an “AI humanizer”

If you insist on having an AI-humanizer layer in your stack, Clever Ai Humanizer behaved more predictably in my testing. I’m not saying it’s magical, but:

- It generally kept structure and meaning cleaner.

- Needed less surgery afterwards.

Still needs a human pass, but if you’re comparing tools for “make this AI content sound less AI-ish” without wrecking clarity, Clever Ai Humanizer is the one I’d test first.

- What I’d do with your current content

Since you’re worried about tone, clarity, and trust:

- Read your AI text out loud. Anywhere you stumble, get bored, or think “I wouldn’t actually say this,” mark it.

- Check that your important terms and claims are:

- Precise

- Not reworded into vague synonyms

- Supported by examples or sources if needed

- Use GPTHuman only sparingly on clunky lines, then re-edit those parts for grammar and logic.

- Keep a very short style doc for yourself: 3–5 bullet points on how your brand “talks,” and force everything to match that.

If your main goal is “sounds natural, accurate, and trustworthy,” human editing will always beat pushing the whole thing through GPTHuman and hoping detectors or users approve. The tool is, at best, a sidekick, not a safety net.

Short version: GPTHuman is trying to fix the wrong problem.

Everyone above focused on tone slips, detectors and the “bypass everything” promise. I agree with most of that, but I think the bigger issue for you (trust + SEO) is control. GPTHuman behaves like a black box content spinner, which is the opposite of what you want when you care about accuracy and brand voice.

A few extra angles that @jeff, @viajantedoceu and @mikeappsreviewer did not dig into as much:

- It strips out “author fingerprints”

If you write (or even prompt) with a specific POV, GPTHuman tends to smooth it into generic internet voice. That might reduce some AI-detector signals, but it also erases:

- Your unique turns of phrase

- Your “spiky” opinions that create backlinks and shares

- Narrative hooks (little stories, personal asides) that keep people reading

This is bad for trust and long-term SEO, because repeat readers come back for you, not for a sanitized rewrite.

- It confuses semantic intent

For SEO, the issue is not just swapping keywords; it is semantic intent. I saw GPTHuman:

- Merge informational and transactional language in the same section

- Dilute commercial intent terms with fuzzy synonyms

- Break “topical clusters” by rephrasing headings so they no longer align with your internal links

So you can end up with copy that still “mentions” your terms, but feels off for the actual search intent, which is exactly what hurts dwell time and conversions.

- It makes attribution and E‑E‑A‑T harder

Another side effect: when a tool rewrites aggressively, you lose:

- Clear authorial stance (“Here’s what I did, here’s what I found”)

- Direct references to your own data, experience or client results

- Clean citations and signal words like “according to [source]”

For YMYL or expert topics, those are the pieces Google looks at for E‑E‑A‑T. GPTHuman’s habit of softening and generalizing works against you here.

Where I slightly disagree with others:

I would not even use GPTHuman as a “light pass” on full articles. Once you let it touch the whole draft, it becomes harder to see what changed and where meaning drift crept in. For anything that matters, I would rather have unpolished but honest AI text plus a strong human edit than “prettified” but semantically wobbly rewrites.

On tools: Clever Ai Humanizer was mentioned already, and in your situation it is the only “humanizer” I would even test, mainly because it tends to respect structure and terminology a bit more.

Quick pros / cons from my own runs with Clever Ai Humanizer:

Pros

- Better at preserving technical terms and product names, which protects your clarity and keyword targeting

- Keeps paragraph logic mostly intact instead of randomly shuffling clauses

- Less over-the-top synonym swapping, so you do not get bizarre phrases that scare off serious readers

- Interface makes it easier to run small sections selectively, which encourages you to keep control of the draft

Cons

- Still not a replacement for a real editorial pass; it can reduce stiffness but will not magically give you a strong voice

- Occasionally adds mild “polite filler,” which you will want to trim for sharp, conversion-focused pages

- No silver bullet for detectors either; you still need to accept that some AI signal is normal in 2026

- If you rely on it too much, your content can start sounding uniformly “nice” and lose edge

So where does that leave you if your goal is natural, accurate and trustworthy content?

Instead of using GPTHuman on entire posts, try this workflow:

- Keep your original AI draft intact.

- Identify only the parts that feel robotic or clunky: intros, transitions, conclusion.

- Run just those blocks through something like Clever Ai Humanizer.

- Then do a surgical human edit focused on:

- Restoring your unique phrases and opinions

- Re-inserting exact key terms where the tool got “creative”

- Adding one or two concrete examples or mini case studies per main section

That way the “humanizer” is a scalpel, not a blender.

In short, GPTHuman is fine if your bar is “this should not look like raw AI at a glance.” If your bar is “this should build authority, rank over time and sound like a real expert,” it introduces more problems than it solves.

Your review holds up. GPTHuman shifts tone, warps terms, adds fluff. Detectors gave mixed reads. In a 4 week test, human edited GPT-4 beat the GPTHuman pass on impressions, CTR, and dwell. Pricing limits and default training hurt trust.

Simpler fix, different path:

Do a reverse outline and voice pass. List your key claims and exact terms. Close the draft. Record a 2 minute voice note saying it to the buyer. Transcribe to text. Reinsert sources and numbers. Keep paragraphs under 3 lines. Ship. Have two readers from your target audience spot check for clarity and intent.