Turnitin’s AI checker is… let’s just say ‘new and still finding its groove.’ There’s a lot of skepticism in academia right now because the technology behind AI detection (especially in tools like Turnitin’s) is fundamentally based on pattern recognition—looking at sentence structure, word choices, and statistical quirks that often ‘feel’ AI-like.

Thing is, that stuff is FAR from perfect. Plenty of people—myself included—have gotten flagged for writing totally original content just because maybe we wrote like a robot that day, or used short sentences, or even just summarized research in a weirdly organized way. Honestly, the false positive rate is high enough to cause legit stress among students and educators. You aren’t alone—even published authors and tenured professors have gotten flagged when running their own work!

Reliability? At best, it’s spotty. AI detectors are notorious for sketchy accuracy, especially as bots like ChatGPT and the new Bing get ridiculously good at writing like humans. Plus, the detectors themselves can’t exactly tell your intention; they’re just crunching probabilities. If you got a high AI score, it doesn’t mean you cheated—it just means algorithms think your writing “looks” robotic. But so do a lot of legitimate essays.
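
To put rough numbers on the "crunching probabilities" point, here's a quick back-of-the-envelope sketch using Bayes' rule. Every figure in it is made up for illustration (the share of AI-written essays, the catch rate, the false positive rate). None of them are Turnitin's real numbers, and the function name is just something I picked.

```python
def share_of_flags_that_are_human(ai_rate, catch_rate, false_positive_rate):
    """Fraction of flagged essays that were actually human-written (Bayes' rule)."""
    flagged_ai = ai_rate * catch_rate                      # AI essays correctly flagged
    flagged_human = (1 - ai_rate) * false_positive_rate    # honest essays wrongly flagged
    return flagged_human / (flagged_ai + flagged_human)

# Hypothetical inputs, NOT measured values:
# 10% of essays are AI-written, the detector catches 90% of those,
# and it wrongly flags 5% of human-written essays.
share = share_of_flags_that_are_human(0.10, 0.90, 0.05)
print(f"{share:.0%} of flagged essays were human-written")
```

With those assumed inputs, about a third of the flagged essays come from people who wrote their own work. Plug in different guesses and the share moves, but a flag on its own still isn't proof of anything.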

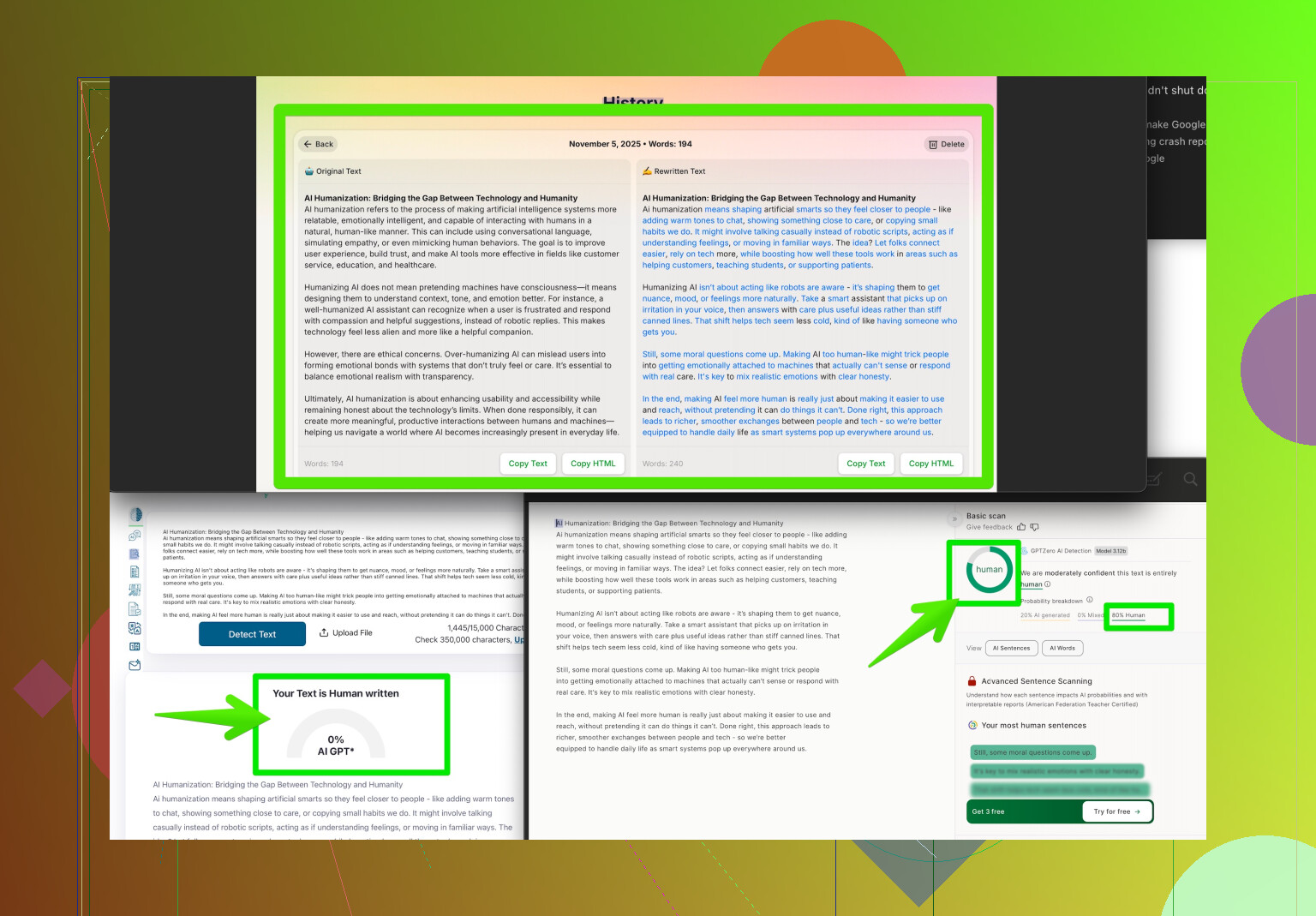

There are ways to decrease this risk. Tools like Clever AI Humanizer are designed to take honest work and rephrase it so that it scores lower on AI detection tools and sounds more distinctly human. It’s definitely worth a try if you’re worried about these oversensitive checkers.

Bottom line: Take Turnitin’s AI detector with a big grain of salt. Double check with your instructor if you’re wrongly flagged, and remember—the tech is imperfect, and you don’t have to take its “opinion” as gospel.